Open-sourcing Octofriend, a coding agent that works with GPT-5, Claude, and open LLMs

We're launching Octofriend, an open-source coding agent that works with GPT-5, Claude, and open-source (and even local) LLMs like GLM-4.5 and GPT-OSS-120B. Octo runs in your terminal: it's like Claude Code, but it works with pretty much any LLM.

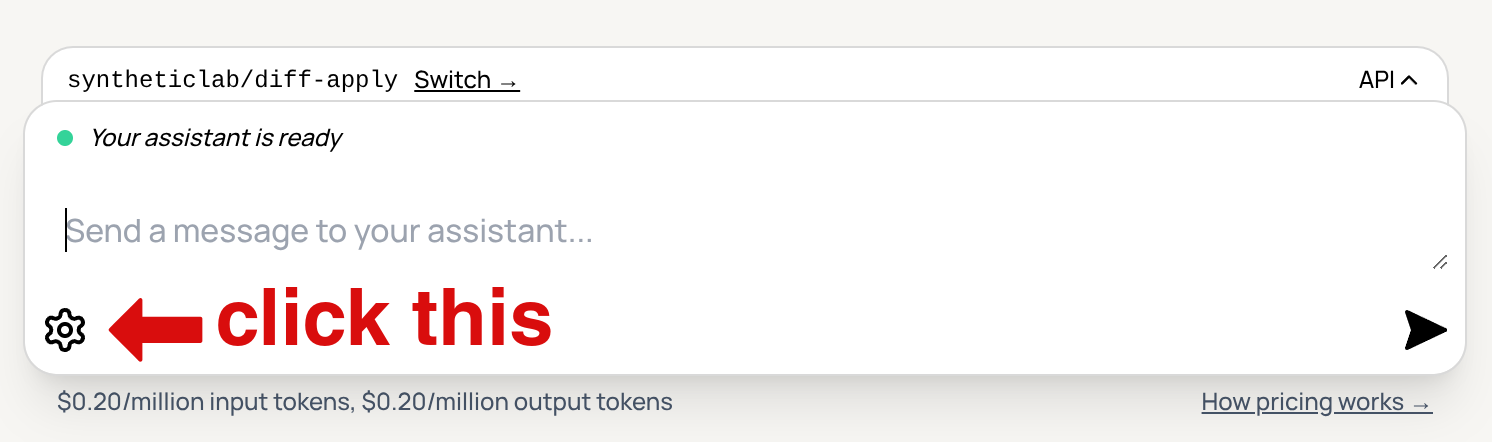

Octo has two optional custom-trained models that automatically fix the minor diff-edit and JSON-encoding errors that even very good coding models sometimes make. Using the autofix models is usually faster and cheaper than retrying the large coding model, and it helps keep the large model from getting confused. Octo can use these autofix models with any LLM! Naturally, we're open-sourcing the autofix models we trained, down to the training pipelines themselves.
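The control flow described above (try the edit, try a cheap fixer, and only then go back to the large model) can be sketched roughly as follows. This is not Octofriend's code: the real autofix models are trained LLMs, while `toy_diff_fixer` and `toy_json_fixer` here are hypothetical stand-ins (a `difflib` fuzzy match and a trailing-comma regex) that exist only to make the ordering runnable. The hypothetical `EditFailed` exception marks the point where an agent would fall back to re-prompting the large model.

```python
import difflib
import json
import re
from typing import Callable, Optional


class EditFailed(Exception):
    """Hypothetical signal: neither the exact edit nor the cheap fix applied,
    so the caller would fall back to retrying the large coding model."""


def toy_diff_fixer(file_text: str, search: str) -> Optional[str]:
    # Stand-in for a small autofix model (NOT a trained model): find the span
    # of the file with the same line count that most closely matches `search`.
    lines = file_text.splitlines(keepends=True)
    n = max(1, len(search.splitlines()))
    candidates = ["".join(lines[i:i + n]) for i in range(len(lines) - n + 1)]
    best = difflib.get_close_matches(search, candidates, n=1, cutoff=0.8)
    return best[0] if best else None


def apply_edit(
    file_text: str,
    search: str,
    replace: str,
    fixer: Callable[[str, str], Optional[str]] = toy_diff_fixer,
) -> str:
    # 1. Exact match: apply the edit as the large model wrote it.
    if search in file_text:
        return file_text.replace(search, replace, 1)
    # 2. Cheap path: ask the fixer for the text the model probably meant.
    fixed = fixer(file_text, search)
    if fixed is not None and fixed in file_text:
        return file_text.replace(fixed, replace, 1)
    # 3. Expensive path is left to the caller.
    raise EditFailed(f"could not locate search text: {search!r}")


def toy_json_fixer(raw: str) -> str:
    # Stand-in for a JSON autofix model: only removes trailing commas before
    # } or ]. Naive, since it ignores whether the comma sits inside a string.
    return re.sub(r",\s*([}\]])", r"\1", raw)


def parse_tool_args(raw: str, fixer: Callable[[str], str] = toy_json_fixer) -> dict:
    try:
        return json.loads(raw)
    except json.JSONDecodeError:
        # If the fixer didn't help, json.loads raises JSONDecodeError again.
        return json.loads(fixer(raw))


source = "def add(a, b):\n    return a + b\n"
# The model dropped a space in its search block, so it doesn't match exactly.
print(apply_edit(source, "def add(a,b):\n    return a + b\n",
                 "def add(a: int, b: int) -> int:\n    return a + b\n"))
print(parse_tool_args('{"path": "a.py", "line": 3,}'))
```

The design choice this illustrates is cost ordering: the fixer only sees a small, local problem (one search block or one JSON string), so it can be far cheaper than asking the large model to regenerate the whole tool call.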

Octo works especially well with reasoning models. Many coding agents struggle to handle reasoning tokens correctly, especially the encrypted ones returned by OpenAI's and Anthropic's APIs. Octo handles those tokens carefully, and we think you'll notice how much smarter it is as a result.
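One common way agents mishandle encrypted reasoning can be sketched as follows. The message shapes here (`TextBlock`, `ReasoningBlock`, `Turn`, and the `"reasoning"`/`"encrypted"` dict keys) are hypothetical and are not the actual OpenAI or Anthropic wire formats; the sketch only contrasts flattening history to plain text, which silently discards the model's earlier reasoning, with replaying every block verbatim and in its original order.

```python
from dataclasses import dataclass, field
from typing import List, Union

# Hypothetical message shapes for illustration; not a real provider's API format.


@dataclass
class TextBlock:
    text: str


@dataclass
class ReasoningBlock:
    # Opaque, provider-encrypted reasoning: the client can't read it,
    # only store it and send it back unchanged.
    encrypted: str


Block = Union[TextBlock, ReasoningBlock]


@dataclass
class Turn:
    role: str  # "user" or "assistant"
    blocks: List[Block] = field(default_factory=list)


def naive_history(turns: List[Turn]) -> List[dict]:
    # Pitfall: flatten each turn to plain text, which drops the
    # reasoning the model produced on earlier turns.
    return [
        {"role": t.role,
         "content": "".join(b.text for b in t.blocks if isinstance(b, TextBlock))}
        for t in turns
    ]


def build_history(turns: List[Turn]) -> List[dict]:
    # Replay every block in order, passing reasoning through byte-for-byte
    # so the provider can make use of it on the next request.
    history = []
    for t in turns:
        content = []
        for b in t.blocks:
            if isinstance(b, ReasoningBlock):
                content.append({"type": "reasoning", "encrypted": b.encrypted})
            else:
                content.append({"type": "text", "text": b.text})
        history.append({"role": t.role, "content": content})
    return history


turns = [
    Turn("user", [TextBlock("Fix the failing test.")]),
    Turn("assistant", [ReasoningBlock("opaque-blob-123"), TextBlock("Patched utils.py.")]),
]
print(naive_history(turns)[1])  # reasoning is gone
print(build_history(turns)[1])  # reasoning is preserved, in order
```

Real providers differ in the exact fields and ordering rules they require, so their API documentation is the authority; the general point is that the agent should treat encrypted reasoning as an opaque value to round-trip, not as text to reformat or drop.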

We've been busy for the past few months: we've also shipped improvements to the main Synthetic site, like new model support (including some excellent coding models you can use with Octo), and a free trial.

Octofriend

We're open-sourcing Octofriend, the cute terminal coding agent we've been working on for the past couple of months. Octo works great with GPT-5 and Claude 4, and of course we've also made sure it works just as well with the open models we host on Synthetic, like zai-org/GLM-4.5 and moonshotai/Kimi-K2.

Octo is sort of like Claude Code, except that it works with just about any model in existence, even LLMs running locally on your own machine. It also has two optional helper models we trained, which automatically fix the minor diff-edit inaccuracies and JSON-encoding errors that even very good coding models sometimes make. If you're familiar with the , you'll recognize that even the top coding models sometimes fail to solve problems because of edit-format inaccuracies. Thanks to the autofix models, Octo should run into far fewer of those problems. This helps in a few ways: